Dext Framework: Reaching Maximum Performance with the Zero-Alloc Pipeline

📌 Overview

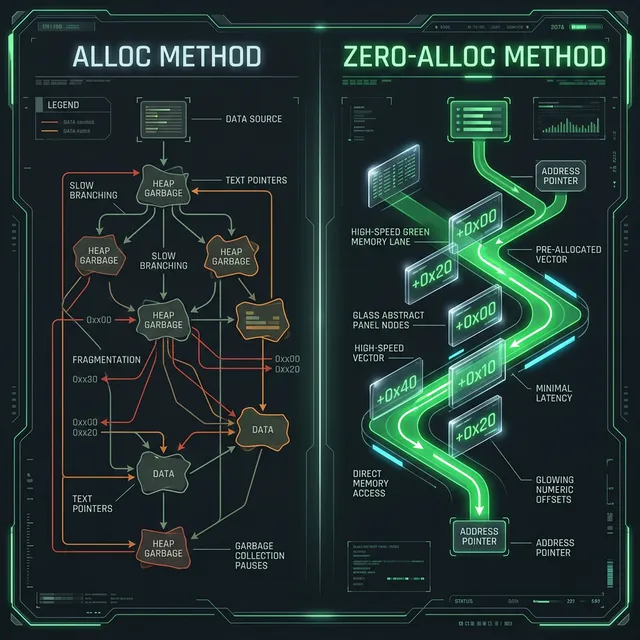

When building high-performance APIs and microservices, the biggest bottleneck is often not raw processing but memory management. In traditional Delphi code, heavy use of basic classes (TList<T>, TStrings, TDictionary) throughout the lifecycle of an HTTP request punishes the Heap with short-lived allocations, causing fragmentation and a gradual drop in throughput.

The Dext Framework has implemented and merged the Zero-Allocation Pipeline feature. This engineering milestone redesigns the framework internals so that critical operations (routing, middlewares, ORM, and JSON parsing) make zero Heap allocations on their hot paths.

⏪ Nostalgia: From “Old-School” Pointers to Typed Safety

Many old-school Delphi developers (from the Delphi 7 era) remember that, to squeeze the last drop of performance out of a server, the recipe was to abandon object orientation and fall back to direct byte management: heavy use of pointers, PByte, absolute variables, and records passed by reference (var/const).

This code was ultra-fast, but had two major problems:

- Extreme Danger: The compiler turned a blind eye. One wrong pointer increment could corrupt application memory and end in an Access Violation.

- Heavy Maintenance: Screens filled with pointer arithmetic scared new programmers on the team.

Microsoft’s .NET solved this pain by introducing the concept of Span<T>. The Dext Framework brought this exact philosophy to Delphi.

The TSpan<T> and TVector<T> types from Dext.Collections are, in essence, the old and powerful Delphi 7 pointers wrapped in a generic, strongly typed shell. The compiler knows what is there and loops are safe, but internally you still get raw pointer speed.
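To make the "typed pointer" idea concrete, here is a minimal sketch of a span-like view in plain Delphi. It is not Dext's TSpan<T>: the TByteView record and its members are hypothetical, and the sketch is specialized to bytes to keep it short. The view is only a raw pointer plus a length, so slicing copies nothing, yet every read goes through a bounds check.

```delphi
program SpanSketch;

{$APPTYPE CONSOLE}

uses
  System.SysUtils;

type
  // Hypothetical span-like view (not Dext's TSpan<T>): raw pointer + length.
  // It does not own the memory, so it must not outlive the underlying buffer.
  TByteView = record
  private
    FData: PByte;
    FLength: Integer;
    function GetItem(Index: Integer): Byte;
  public
    constructor Create(AData: PByte; ALength: Integer);
    function Slice(Start, Count: Integer): TByteView;
    property Length: Integer read FLength;
    property Items[Index: Integer]: Byte read GetItem; default;
  end;

constructor TByteView.Create(AData: PByte; ALength: Integer);
begin
  FData := AData;
  FLength := ALength;
end;

function TByteView.GetItem(Index: Integer): Byte;
begin
  // The bounds check that hand-written Delphi 7 pointer code usually skipped
  if (Index < 0) or (Index >= FLength) then
    raise ERangeError.CreateFmt('Index %d out of range (length %d)', [Index, FLength]);
  Result := FData[Index];  // PByte supports indexing (pointer math is on for PByte)
end;

function TByteView.Slice(Start, Count: Integer): TByteView;
begin
  if (Start < 0) or (Count < 0) or (Start + Count > FLength) then
    raise ERangeError.Create('Slice out of range');
  // Same memory, new bounds: no copy and no heap allocation
  Result := TByteView.Create(FData + Start, Count);
end;

var
  Buf: array[0..4] of Byte = (10, 20, 30, 40, 50);
  View, Tail: TByteView;
begin
  View := TByteView.Create(@Buf[0], Length(Buf));
  Tail := View.Slice(2, 3);            // views Buf[2..4]
  Writeln(Tail[0], ' ', Tail.Length);  // 30 3
  Buf[2] := 99;                        // the view sees the change: nothing was copied
  Writeln(Tail[0]);                    // 99
end.
```

The trade-off is the same one every span type accepts: the view is cheap because it does not own its memory, so its lifetime must stay inside the lifetime of the buffer it points to.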

🛠️ The Anatomy of Zero-Alloc: How Does It Work?

1. Web Pipeline & Stack Routing

Previously, splitting a URL like /api/v1/users/50 created multiple string arrays and temporary structs.

The Cure: Dext now uses TByteSpan (memory spans) and small TVector<T> buffers instantiated directly on the Stack. The result is ultra-fast routing whose data is released automatically when the call scope ends, without costing a single byte of Heap allocation.
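A minimal sketch of the stack-routing idea, assuming a simplified model rather than Dext's actual router: each path segment is stored only as a (start, length) pair into the original string, inside a fixed-size record that lives on the stack of the handling procedure. TSegment, TSegments, SplitPath, SegmentEquals, and SegmentToInt are hypothetical names; the sketch uses string indices instead of Dext's TByteSpan, and the capacity of 16 segments is an arbitrary choice.

```delphi
program StackRoutingSketch;

{$APPTYPE CONSOLE}

type
  // Hypothetical: a path segment is only (start, length) into the original string
  TSegment = record
    Start: Integer;  // 1-based index into the path
    Len: Integer;
  end;

  // Fixed-capacity segment list; as a local variable it lives entirely on the stack
  TSegments = record
    Items: array[0..15] of TSegment;
    Count: Integer;
  end;

// Splits '/api/v1/users/50' into segments without creating any substring.
// Returns False if the path has more segments than the fixed capacity.
function SplitPath(const Path: string; out Segs: TSegments): Boolean;
var
  I, SegStart: Integer;
begin
  Segs.Count := 0;
  SegStart := 0;  // 0 = not currently inside a segment
  for I := 1 to Length(Path) + 1 do
    if (I > Length(Path)) or (Path[I] = '/') then
    begin
      if SegStart > 0 then  // close the current segment
      begin
        if Segs.Count > High(Segs.Items) then
          Exit(False);  // more segments than the fixed stack capacity
        Segs.Items[Segs.Count].Start := SegStart;
        Segs.Items[Segs.Count].Len := I - SegStart;
        Inc(Segs.Count);
        SegStart := 0;
      end;
    end
    else if SegStart = 0 then
      SegStart := I;  // first character of a new segment
  Result := True;
end;

// Compares a segment with a literal character by character (no Copy call)
function SegmentEquals(const Path: string; const Seg: TSegment;
  const Literal: string): Boolean;
var
  I: Integer;
begin
  if Seg.Len <> Length(Literal) then
    Exit(False);
  for I := 0 to Seg.Len - 1 do
    if Path[Seg.Start + I] <> Literal[I + 1] then
      Exit(False);
  Result := True;
end;

// Parses a numeric segment in place; assumes it holds only decimal digits
function SegmentToInt(const Path: string; const Seg: TSegment): Integer;
var
  I: Integer;
begin
  Result := 0;
  for I := Seg.Start to Seg.Start + Seg.Len - 1 do
    Result := Result * 10 + (Ord(Path[I]) - Ord('0'));
end;

procedure HandleRequest(const Path: string);
var
  Segs: TSegments;  // stack memory, released automatically when the procedure returns
begin
  if SplitPath(Path, Segs) and (Segs.Count = 4) and
     SegmentEquals(Path, Segs.Items[2], 'users') then
    Writeln('users route, id = ', SegmentToInt(Path, Segs.Items[3]));
end;

begin
  HandleRequest('/api/v1/users/50');  // users route, id = 50
end.
```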

2. ORM Specification & Constraints Without Inflating the Heap

Previously, every .Where(), .Sort(), or .Include() call added through the ORM's fluent API to build a dynamic query created tree structures and intermediate lists that inflated RAM usage.

The Cure: small Stack-based arrays (TVector<T>) now hold each constraint node until the query is compiled into the target dialect (the final SQL), lowering the cost of the fluent syntax.
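As a rough illustration of the pattern (not Dext's TVector<T> or its ORM internals), the sketch below collects constraint nodes in a fixed-capacity generic record whose storage is an inline array, so a local variable of that type lives on the stack, and then walks the nodes once to emit SQL. TInlineVector<T>, TConstraintNode, Node, and CompileSql are hypothetical; the capacity of 8 is arbitrary, and a production vector would presumably spill to the Heap when it runs out of inline slots instead of rejecting the item.

```delphi
program InlineVectorSketch;

{$APPTYPE CONSOLE}

uses
  System.SysUtils;

const
  InlineCapacity = 8;  // arbitrary inline capacity for this sketch

type
  // Hypothetical fixed-capacity vector: the items are stored inside the record itself
  TInlineVector<T> = record
  private
    FItems: array[0..InlineCapacity - 1] of T;
    FCount: Integer;
    function GetItem(Index: Integer): T;
  public
    procedure Clear;
    function TryAdd(const Value: T): Boolean;
    property Count: Integer read FCount;
    property Items[Index: Integer]: T read GetItem; default;
  end;

  TConstraintKind = (ckWhere, ckOrderBy);

  TConstraintNode = record
    Kind: TConstraintKind;
    Expr: string;
  end;

function TInlineVector<T>.GetItem(Index: Integer): T;
begin
  if (Index < 0) or (Index >= FCount) then
    raise ERangeError.CreateFmt('Index %d out of range', [Index]);
  Result := FItems[Index];
end;

procedure TInlineVector<T>.Clear;
begin
  FCount := 0;  // call first: unmanaged fields of a local record are not zeroed
end;

function TInlineVector<T>.TryAdd(const Value: T): Boolean;
begin
  Result := FCount < InlineCapacity;  // this sketch rejects items beyond capacity
  if Result then
  begin
    FItems[FCount] := Value;
    Inc(FCount);
  end;
end;

function Node(Kind: TConstraintKind; const Expr: string): TConstraintNode;
begin
  Result.Kind := Kind;
  Result.Expr := Expr;
end;

// Hypothetical "dialect compilation" step: a single pass over the nodes
function CompileSql(const Table: string;
  const Nodes: TInlineVector<TConstraintNode>): string;
var
  I: Integer;
  Where, OrderBy: string;
begin
  Where := '';
  OrderBy := '';
  for I := 0 to Nodes.Count - 1 do
    case Nodes[I].Kind of
      ckWhere:
        begin
          if Where <> '' then
            Where := Where + ' AND ';
          Where := Where + Nodes[I].Expr;
        end;
      ckOrderBy:
        OrderBy := Nodes[I].Expr;
    end;
  Result := 'SELECT * FROM ' + Table;
  if Where <> '' then
    Result := Result + ' WHERE ' + Where;
  if OrderBy <> '' then
    Result := Result + ' ORDER BY ' + OrderBy;
end;

procedure Demo;
var
  Nodes: TInlineVector<TConstraintNode>;  // node storage is part of this stack frame
begin
  Nodes.Clear;
  Nodes.TryAdd(Node(ckWhere, 'Age > 18'));
  Nodes.TryAdd(Node(ckWhere, 'Active = 1'));
  Nodes.TryAdd(Node(ckOrderBy, 'Name'));
  // SELECT * FROM Users WHERE Age > 18 AND Active = 1 ORDER BY Name
  Writeln(CompileSql('Users', Nodes));
end;

begin
  Demo;
end.
```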

3. Extreme JSON Model Binding (Direct Memory Inject)


Traditional JSON reading involves RTTI decoding, dynamic setters, and dictionary trees. For arrays with thousands of records, this overhead is crippling.

The Technological Leap:

Dext now skips setters and reads the physical memory offsets of the class fields behind each property (Prototype.Entity<T>). At parsing time, the framework writes the bytes directly to the field's memory address:

```delphi
// Obj: target instance; Offset: byte offset of the backing field; Value: parsed Integer
PInteger(PByte(Obj) + Offset)^ := Value; // Zero alloc, zero RTTI overhead!
```
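A minimal, self-contained sketch of the offset technique using the standard System.Rtti unit: the offset of the FId field is resolved once through TRttiField.Offset, and the hot loop then stores values with the same pointer expression as above, with no setter call and no per-value RTTI lookup. TCustomer and the surrounding program are hypothetical, and this is not necessarily how Prototype.Entity<T> obtains its offsets. The pointer cast must match the field's declared type (PInteger for an Integer field).

```delphi
program FieldOffsetSketch;

{$APPTYPE CONSOLE}

uses
  System.Rtti;

type
  // Hypothetical entity; default RTTI settings include private fields
  TCustomer = class
  private
    FId: Integer;
    FName: string;
  public
    property Id: Integer read FId write FId;
    property Name: string read FName write FName;
  end;

var
  Ctx: TRttiContext;
  IdOffset: Integer;
  Customers: array[0..2] of TCustomer;
  I: Integer;
begin
  // One-time RTTI lookup: byte offset of FId relative to the object address
  Ctx := TRttiContext.Create;
  IdOffset := Ctx.GetType(TCustomer).GetField('FId').Offset;

  // Hot path: a typed store at (object address + offset) for each instance
  for I := 0 to High(Customers) do
  begin
    Customers[I] := TCustomer.Create;
    PInteger(PByte(Customers[I]) + IdOffset)^ := 100 + I;
  end;

  Writeln(Customers[2].Id);  // 102

  for I := 0 to High(Customers) do
    Customers[I].Free;
end.
```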

4. HTTP Request Lazy-Views

Request properties such as GetQuery, GetHeaders, and GetCookies commonly create dictionaries or TStringList instances on every HTTP request simply because they exist in the structure, even if the controller code never reads the headers.

The Cure: WebHost mappings (whether Indy, WebBroker, Delphi Cross Sockets, etc.) now use View-Structs that are only instantiated and processed lazily, on first access, keeping the HTTP server virtually free of per-request overhead.
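A minimal sketch of the lazy-view pattern, assuming a simplified request object rather than Dext's View-Structs: the view keeps only the raw header text, and the TDictionary is created and filled the first time Headers is read. TRequestView, HeadersMaterialized, and the CRLF-separated "Name: value" input format are hypothetical; a class is used here only so the dictionary can be freed in a destructor.

```delphi
program LazyHeadersSketch;

{$APPTYPE CONSOLE}

uses
  System.SysUtils, System.Generics.Collections;

type
  // Hypothetical request view: the dictionary stays nil until first access
  TRequestView = class
  private
    FRawHeaders: string;
    FHeaders: TDictionary<string, string>;
    function GetHeaders: TDictionary<string, string>;
  public
    constructor Create(const RawHeaders: string);
    destructor Destroy; override;
    function HeadersMaterialized: Boolean;
    property Headers: TDictionary<string, string> read GetHeaders;
  end;

constructor TRequestView.Create(const RawHeaders: string);
begin
  inherited Create;
  FRawHeaders := RawHeaders;  // keeps a reference to the raw text; no parsing yet
end;

destructor TRequestView.Destroy;
begin
  FHeaders.Free;  // safe when the headers were never requested (nil)
  inherited;
end;

function TRequestView.HeadersMaterialized: Boolean;
begin
  Result := FHeaders <> nil;
end;

function TRequestView.GetHeaders: TDictionary<string, string>;
var
  Line: string;
  P: Integer;
begin
  if FHeaders = nil then
  begin
    // First access: allocate and parse once, then reuse
    FHeaders := TDictionary<string, string>.Create;
    for Line in FRawHeaders.Split([#13#10], TStringSplitOptions.ExcludeEmpty) do
    begin
      P := Line.IndexOf(':');
      if P > 0 then
        FHeaders.AddOrSetValue(Line.Substring(0, P).Trim, Line.Substring(P + 1).Trim);
    end;
  end;
  Result := FHeaders;
end;

var
  Req: TRequestView;
begin
  Req := TRequestView.Create('Host: example.com'#13#10'Accept: */*');
  try
    Writeln(Req.HeadersMaterialized);  // FALSE: nothing parsed or allocated yet
    Writeln(Req.Headers['Host']);      // example.com (parsed on first access)
    Writeln(Req.HeadersMaterialized);  // TRUE
  finally
    Req.Free;
  end;
end.
```

If a controller never touches Headers, the request pays only for storing the raw text; the parsing and the dictionary allocation are skipped entirely.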

📈 High Performance Benchmarks

Below are the benchmarks recorded and consolidated after the feature was merged. They show how gains of nanoseconds per operation add up to large throughput gains at scale.

| Execution Scope | Test Case | Iterations | Accumulated Time | Allocations |
|---|---|---|---|---|
| Routing Engine | 1. Literal Match (GET /api/v1/resource50) | 10,000 | 49.44 ms | 0 |
| | 2. Pattern Match (POST /api/users/99/orders/Abc123XYz) | 10,000 | 47.49 ms | 0 |
| Middleware Pipeline | 1. Full Chain Execution | 10,000 | 0.62 ms | 0 |
| ORM Specification | 1. Expression Tree Building (3 conditions) | 100,000 | 237.27 ms | 0 |
| | 2. Constraint (Where, Include, Select, Paging) | 100,000 | 122.50 ms | 0 |
| JSON Deserialization | 1. JSON back to Record | 50,000 | 117.67 ms | 0 |
| HTTP Request | 1. Static/Pre-Instantiated (Old) | 50,000 | 113.47 ms | Allocated |
| | 2. Lazy Loading / Smart Vector (New) | 50,000 | 3.99 ms | 0 |
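Dividing each accumulated time by its iteration count gives the approximate cost of a single operation, which is where the per-operation nanosecond figures come from. This assumes each accumulated time covers only the measured operation:

$$
\begin{aligned}
t_{\text{op}} &= \frac{T_{\text{accumulated}}}{N_{\text{iterations}}} \\
t_{\text{literal route}} &= \frac{49.44\ \text{ms}}{10\,000} = 4.944\ \mu\text{s} \\
t_{\text{middleware chain}} &= \frac{0.62\ \text{ms}}{10\,000} = 62\ \text{ns} \\
t_{\text{request, old}} &= \frac{113.47\ \text{ms}}{50\,000} \approx 2.27\ \mu\text{s} \\
t_{\text{request, lazy}} &= \frac{3.99\ \text{ms}}{50\,000} = 79.8\ \text{ns} \\
\frac{t_{\text{request, old}}}{t_{\text{request, lazy}}} &= \frac{113.47}{3.99} \approx 28.4
\end{aligned}
$$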

🏆 Market Advantages

- Load Stability: Web server memory usage stays flat instead of ramping up, preventing dreaded crashes caused by RAM bottlenecks under stress.

- Deterministic Latency: Without the memory manager having to sweep the Heap to free small string blobs, call response times become highly predictable.

- Use of TVector<T>: In Dext, we prioritize inline Stack arrays built on our own native implementation, putting the ecosystem on the same optimization level as modern .NET Core web frameworks.

🎯 Conclusion: The Dext Framework is not just about what it does, but how it does it. The Zero-Alloc Pipeline positions Delphi backends as low-latency enterprise titans.

Article prepared by the Dext Framework engineering team.

🔗 Useful Links

- 🌐 Dext Framework Project: Access the Initiative and Features (Don’t forget to leave a star ⭐ on the repository!)

- 📖 Dext Book (Official Documentation): Access the Dext Book Repository with Knowledge Base

- 📚 Delphi Multithreading Book: Meet the definitive guide on concurrency and performance (Portuguese/English)