How We Optimized Delphi Collections and Sped Up Generic Build by 60%

Introduction

Every Delphi developer knows the cost of Generics.Collections. Generics changed the game when they were introduced, but they also brought a mostly invisible “tax”: code bloat and compile times that test our patience daily. At Project Dext, we decided we needed something faster, smarter, and above all, more productive.

The Big Win: Productivity and “Code Folding” ⚡

The biggest productivity villain in large Delphi projects is the idle time spent waiting for the compiler to finish its job. When we started, a full build of our large-scale Dext baseline projects took about 8 minutes and 36 seconds.

The core problem is how the compiler handles Generics: the Delphi compiler generates the binary code separately, and in its entirety, for each instantiated generic specialization. In a modern backend stress test of Dext, a single build had to handle:

- 500 specializations of IList<T>

- 1,500 specializations of TDictionary<K,V>

- Total: 2,000 complete specializations duplicating generated code

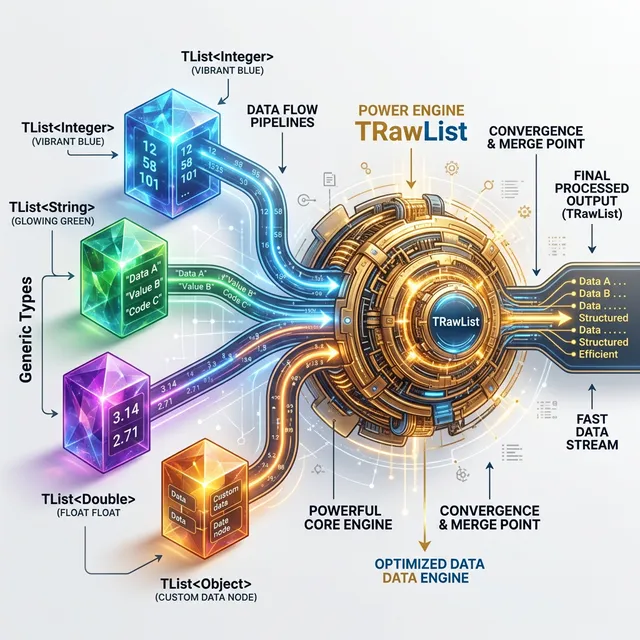

How did we solve this? By implementing the Binary Code Folding pattern: those hundreds of lists and collections share a single central engine (TRawList), designed to operate safely on raw memory slices behind the typed API.
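The folding idea can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Dext's implementation: `TRawListSketch` and `TFoldedList<T>` are hypothetical names, and the sketch only supports unmanaged element types so that raw byte copies are safe. The point is that the storage logic lives in one non-generic class that is compiled once, while each generic specialization adds only small forwarding methods.

```pascal
program CodeFoldingSketch;

{$APPTYPE CONSOLE}

uses
  System.SysUtils;

type
  // Non-generic engine, compiled once. Hypothetical stand-in for the idea
  // behind TRawList; it is not Dext's actual implementation.
  TRawListSketch = class
  private
    FData: TBytes;
    FElemSize: Integer;
    FCount: Integer;
  public
    constructor Create(AElemSize: Integer);
    procedure AddRaw(const AItem);
    procedure GetRaw(AIndex: Integer; out AItem);
    property Count: Integer read FCount;
  end;

  // Thin generic facade: each specialization adds only small forwarding methods.
  TFoldedList<T: record> = class
  private
    FRaw: TRawListSketch;
  public
    constructor Create;
    destructor Destroy; override;
    procedure Add(const AItem: T);
    function Get(AIndex: Integer): T;
    function Count: Integer;
  end;

constructor TRawListSketch.Create(AElemSize: Integer);
begin
  inherited Create;
  FElemSize := AElemSize;
end;

procedure TRawListSketch.AddRaw(const AItem);
begin
  // Grow geometrically so that appends stay amortized O(1).
  if (FCount + 1) * FElemSize > Length(FData) then
    SetLength(FData, (FCount + 1) * FElemSize * 2);
  Move(AItem, FData[FCount * FElemSize], FElemSize);
  Inc(FCount);
end;

procedure TRawListSketch.GetRaw(AIndex: Integer; out AItem);
begin
  if (AIndex < 0) or (AIndex >= FCount) then
    raise EArgumentOutOfRangeException.Create('Index out of range');
  Move(FData[AIndex * FElemSize], AItem, FElemSize);
end;

constructor TFoldedList<T>.Create;
begin
  inherited Create;
  // Assumption of this sketch: raw byte copies are only safe for unmanaged types.
  Assert(not IsManagedType(T), 'Sketch supports unmanaged types only');
  FRaw := TRawListSketch.Create(SizeOf(T));
end;

destructor TFoldedList<T>.Destroy;
begin
  FRaw.Free;
  inherited;
end;

procedure TFoldedList<T>.Add(const AItem: T);
begin
  FRaw.AddRaw(AItem);
end;

function TFoldedList<T>.Get(AIndex: Integer): T;
begin
  FRaw.GetRaw(AIndex, Result);
end;

function TFoldedList<T>.Count: Integer;
begin
  Result := FRaw.Count;
end;

var
  Ints: TFoldedList<Integer>;
  Reals: TFoldedList<Double>;
begin
  Ints := TFoldedList<Integer>.Create;
  Reals := TFoldedList<Double>.Create;
  try
    Ints.Add(42);
    Reals.Add(2.5);
    Writeln(Ints.Get(0), ' ', Reals.Get(0):0:1);
  finally
    Reals.Free;
    Ints.Free;
  end;
end.
```

Both `TFoldedList<Integer>` and `TFoldedList<Double>` reuse the same compiled `AddRaw` and `GetRaw`; a production engine would additionally need to handle managed types (strings, interfaces) correctly, which this sketch deliberately leaves out.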

This cut our compile time dramatically. Even after we added other aggressive runtime-focused layers (which cost the compiler about 1 extra minute), this huge project stabilized at a final build of just 3 minutes and 36 seconds.

The Verdict: We went from 8 minutes 36 seconds (516 s) to 3 minutes 36 seconds (216 s) of waiting. That is a build about 2.4x faster, or roughly 58% less time. It is a lasting gain in daily productivity and a relief to the dev team, without giving up a blazingly fast binary.

Architecture: The Performance Sniper 🎯


Reaching peak performance requires redoing things that no longer scale. We adopted three major engineering pillars across the dozens of internal search and indexing implementations in the Collections:

- Specialized Recursion: We removed `case` statements and branches from the inner loops of the sorting algorithms. The engine provides direct processing paths for `Integer`, `Float`, and `String`, so the processor no longer makes costly decisions along the way.

- Hybrid Sort (QuickSort + Insertion Sort): When a partition becomes small, we abandon the heavy recursion of `QuickSort` and delegate to a CPU-cache-friendly algorithm, `Insertion Sort`, which walks memory linearly.

- Flags Mirroring: The upper `TList<T>` wrapper carries mirrored flags, so hot loops do not have to keep querying `TypeInfo()` for managed types, which saves cycles where they matter most.
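The first two pillars can be illustrated together with an integer-only hybrid sort. This is a minimal sketch, not Dext's code: the routine names are hypothetical and the cutoff of 16 elements is an assumption, since the article does not state the threshold Dext uses. Comparisons are written directly against `Integer`, so the inner loops contain no comparer call and no `case` dispatch.

```pascal
program HybridSortSketch;

{$APPTYPE CONSOLE}

const
  // Assumed cutoff; the article does not state the value Dext uses.
  InsertionCutoff = 16;

// Insertion sort on A[Lo..Hi]: linear memory access, cheap for tiny ranges.
procedure InsertionSortInt(var A: array of Integer; Lo, Hi: Integer);
var
  I, J, Key: Integer;
begin
  for I := Lo + 1 to Hi do
  begin
    Key := A[I];
    J := I - 1;
    while (J >= Lo) and (A[J] > Key) do
    begin
      A[J + 1] := A[J];
      Dec(J);
    end;
    A[J + 1] := Key;
  end;
end;

// Integer-only quicksort that hands small ranges to insertion sort.
procedure HybridSortInt(var A: array of Integer; Lo, Hi: Integer);
var
  I, J, Pivot, Tmp: Integer;
begin
  while Hi - Lo >= InsertionCutoff do
  begin
    Pivot := A[Lo + (Hi - Lo) div 2];
    I := Lo;
    J := Hi;
    repeat
      while A[I] < Pivot do Inc(I);
      while A[J] > Pivot do Dec(J);
      if I <= J then
      begin
        Tmp := A[I];
        A[I] := A[J];
        A[J] := Tmp;
        Inc(I);
        Dec(J);
      end;
    until I > J;
    // Recurse into the left part, then keep looping on the right part.
    HybridSortInt(A, Lo, J);
    Lo := I;
  end;
  // The remaining range is below the cutoff (possibly empty).
  InsertionSortInt(A, Lo, Hi);
end;

var
  Data: array of Integer;
  K: Integer;
begin
  SetLength(Data, 1000);
  RandSeed := 1;
  for K := 0 to High(Data) do
    Data[K] := Random(100000);

  HybridSortInt(Data, 0, High(Data));

  // Verify the result is in non-decreasing order.
  for K := 1 to High(Data) do
    if Data[K - 1] > Data[K] then
      Halt(1);
  Writeln('sorted');
end.
```

A string or floating-point variant would be a separate copy of the same routine with its own element type, which is the trade-off behind the "specialized" approach: more source code, fewer decisions at runtime.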

The Decisive Stage: Dext vs RTL 📊

With the engines tuned, the benchmarks confirm the gains. They are run strictly single-threaded, in completely isolated processes (no interference from antivirus software or aggressive background tasks).
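A single-threaded measurement of this kind can be sketched with the RTL's `TStopwatch`. The harness below is an assumption about methodology, not the benchmark suite behind the numbers in this article: `BestSortMs` is a hypothetical helper, and taking the best of several runs is one common way to reduce noise. It times only the RTL `TList<Integer>.Sort` call, not the fill.

```pascal
program SortBenchSketch;

{$APPTYPE CONSOLE}

uses
  System.SysUtils, System.TimeSpan, System.Diagnostics,
  System.Generics.Collections;

// Returns the best (minimum) wall-clock time in milliseconds over ARuns
// sorts of ACount pseudo-random integers.
function BestSortMs(ACount, ARuns: Integer): Double;
var
  List: TList<Integer>;
  SW: TStopwatch;
  Run, I: Integer;
  Ms: Double;
begin
  Result := -1;
  List := TList<Integer>.Create;
  try
    for Run := 1 to ARuns do
    begin
      List.Clear;
      RandSeed := 42; // same input on every run
      for I := 1 to ACount do
        List.Add(Random(MaxInt));

      SW := TStopwatch.StartNew; // time only the sort, not the fill
      List.Sort;
      SW.Stop;

      Ms := SW.Elapsed.TotalMilliseconds;
      if (Result < 0) or (Ms < Result) then
        Result := Ms;
    end;
  finally
    List.Free;
  end;
end;

begin
  Writeln(Format('RTL TList<Integer>.Sort, 10k items: %.3f ms (best of 20)',
    [BestSortMs(10000, 20)]));
end.
```

To compare against another collection, the same loop would be repeated with that list type, in a release build and in a separate process per measurement.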

Primitive Types (Pure Speed)

| Operation | Scenario | RTL (ms) | Dext (ms) | Dext time as % of RTL |
|---|---|---|---|---|
| List Sort | 10k Integers | 0.54 | 0.07 | 14.6% (6.8x Faster!) |
| IndexOf | 100 Integers | 0.008 | 0.0002 | 2.4% (42x Faster!) |
| Dictionary Lookup | 100k Items | 6.61 | 1.00 | 15.2% (6.6x Faster!) |
| List Add | 100k Integers | 0.61 | 0.26 | 43.2% (2.3x Faster!) |

Managed Types and Objects (Efficiency with Safety)

Working with strings or circular references adds a heavy reference-counting load. Even with that overhead, Dext stayed ahead:

| Operation | Scenario | RTL (ms) | Dext (ms) | Dext time as % of RTL |
|---|---|---|---|---|
| List Add | 100k Objects | 5.95 | 4.42 | 74.3% (1.3x Faster!) |
| List Sort | 100k Strings | 33.29 | 28.30 | 85.0% (1.2x Faster!) |
| Iteration (for-in) | 10k Strings | 0.16 | 0.14 | 88.1% (1.1x Faster!) |
| Add/Populate | 100k Strings | 9.81 | 9.17 | 93.4% (1.1x Faster!) |

[!TIP] In string-heavy scenarios and heavy processing of managed memory references, the cost of reference counting and of the Windows/OS layer dominates, so the margins are smaller than with primitive types. Even so, Dext still comes out ahead of the RTL in managed collections.

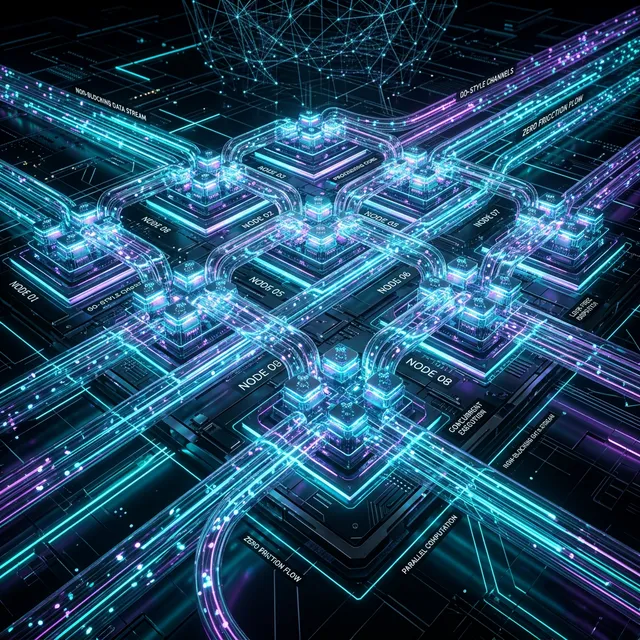

Modern Concurrency: Channels and Lock-Free Operations 🚀

Robust foundations need to distribute load across multiple threads without pain or friction. Most collection libraries rely heavily on blocking implementations built on TCriticalSection or on older wrappers around TMonitor. The biggest problem? On powerful multi-core backend servers, lock contention becomes the friction that limits real throughput at scale.

At Dext, we took the concurrency foundation to a new level. We drew inspiration from the Go language (Golang) by adopting Channels, along with collection implementations that work without manual locking (Lock-Free).

High-Yield Channels (IChannel<T>)

You can speed up each isolated processing step, but clogged queues still hold back overall performance. IChannel<T> provides a better structure, without the locks:

- Zero Lock Contention: Smooth flow between the producers of orders/actions and the pool responsible for consuming the data.

- Native Backpressure out-of-the-box: Buffers with a fixed capacity (`Bounded Channels`) hold back uncoordinated traffic, preventing very fast producer threads from congesting the server and flooding the infrastructure.

- Organic and Linear Design: Asynchronous code that reads much like linear code.

```pascal
var
  Chan: IChannel<TOrder>;
begin
  // Bounded channel: at most 100 orders are buffered at any time
  Chan := TChannel<TOrder>.CreateBounded(100);

  // Producer Thread
  TTask.Run(procedure
    begin
      while Processing do
        Chan.Write(ProduceOrder);
    end);

  // Consumer Thread (No manual locks, no headaches!)
  TTask.Run(procedure
    begin
      while Chan.IsOpen do
        ProcessOrder(Chan.Read);
    end);
end;
```

Lock-based approaches do not scale progressively: from about 4 cores or concurrent requests onward, they often stall on contention, including in the Windows memory manager. Channels in the Dext ecosystem, by contrast, are designed to keep throughput growing on server-side environments as the hardware scales.
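The backpressure behaviour of a bounded buffer can be tried out with the RTL alone. The sketch below deliberately swaps in `TThreadedQueue<T>` from `System.Generics.Collections`, which is monitor-based and therefore exactly the kind of locking structure Dext's channels aim to replace; it only illustrates the bounded-buffer semantics, not a lock-free design. `PumpThroughBoundedQueue` and the `Sentinel` end-of-stream marker are hypothetical names introduced for this example.

```pascal
program BackpressureSketch;

{$APPTYPE CONSOLE}

uses
  System.SysUtils, System.Threading, System.Generics.Collections;

const
  Sentinel = -1; // hypothetical end-of-stream marker for this sketch

// Pushes 1..AItems through a queue of depth ADepth and returns the sum
// computed on the consumer side.
function PumpThroughBoundedQueue(AItems, ADepth: Integer): Int64;
var
  Queue: TThreadedQueue<Integer>;
  Producer: ITask;
  Item: Integer;
begin
  Result := 0;
  Queue := TThreadedQueue<Integer>.Create(ADepth);
  try
    Producer := TTask.Run(
      procedure
      var
        I: Integer;
      begin
        // PushItem blocks while the queue is full: this is the backpressure.
        for I := 1 to AItems do
          Queue.PushItem(I);
        Queue.PushItem(Sentinel);
      end);

    // Consumer: PopItem blocks while the queue is empty.
    repeat
      Item := Queue.PopItem;
      if Item <> Sentinel then
        Inc(Result, Item);
    until Item = Sentinel;

    Producer.Wait;
  finally
    Queue.Free;
  end;
end;

begin
  // 1 + 2 + ... + 1000 = 500500
  Writeln(PumpThroughBoundedQueue(1000, 4));
end.
```

With a depth of 4, the producer can never run more than four items ahead of the consumer, however fast it is. A bounded channel gives the same guarantee; the difference the article argues for lies in how the waiting is implemented.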

Absolute Stability: 140 Unit Tests 🛡️

Making promises is easy; keeping them under constant testing is what counts. Dext’s new core Collections code base starts out with a solid safety net:

- 140 automated tests, passing 100% in the daily runs.

- Strict, continuous memory-leak checks covering the lifecycles of memory and managed objects in limited scopes.

- Integration and `Channels` tests with zero failures under concurrency.

Fluent Expressiveness: Transparent Performance 🧩

Maximum performance with the RTL has always seemed to demand low-level code or raw manual manipulation (with dangerous pointers and confusing loops). But our vision values expressive code. With Dext features such as Prototype.Entity, fluent syntax pays off without giving up precision in what gets generated:

```pascal
var
  Clients: IList<TClient>;
begin
  var c := Prototype.Entity<TClient>;

  // Dext Fluent Syntax: Direct reading for the eyes, sniper compiled for the machine
  Clients := Customers
    .Where(c.Balance > 1000000)
    .Sort(c.Balance.Asc)
    .ToList;
end;
```

Conclusion

The total rebuild of Dext.Collections has proven to be the right path. It demonstrates that a mature framework can replace comfortable but slow legacy internals with a leaner design and come out ahead. The runtime-focused layers cost us only about 1 minute of extra build time compared with our initial internal v1, a small price for the runtime gains they deliver.

In the end, we delivered backend engineering that will allow your application to swallow huge simultaneous queues without blocking.

The native collection implementation showcased here, and its expressiveness, are just the beginning. We will start adopting this infrastructure throughout the Dext platform and introduce more targeted improvements for manipulation and scalability bottlenecks wherever viable, steadily building on these gains.

Ready to start coding outside the contentions and locks of the base RTL? Accelerate with Dext. 🏁🚀

🔗 Useful Links

- 🌐 Project Dext Framework: Visit the Initiative and Features (Don’t forget to leave a star ⭐ on the repository!)

- 📖 Dext Book (Official Docs): Access the Documentation Repository (Dext Book)

- 📚 Delphi Multithreading Book: Discover the definitive guide on concurrency and performance (Portuguese/English)